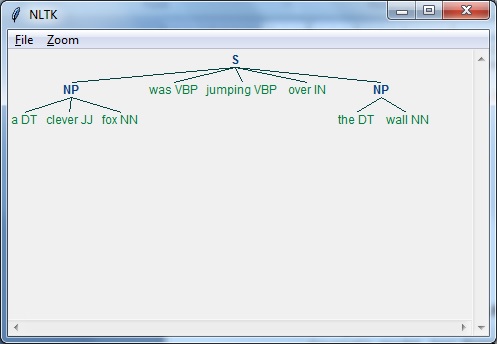

Trained models for use with this parser are included in either of the download packages. The list of models currently distributed is:

- `edu/stanford/nlp/models/parser/nndep/english_UD.gz` (default, English, Universal Dependencies)
- `edu/stanford/nlp/models/parser/nndep/english_SD.gz` (English, Stanford Dependencies)
- `edu/stanford/nlp/models/parser/nndep/PTB_CoNLL_` (English, CoNLL Dependencies)
- `edu/stanford/nlp/models/parser/nndep/CTB_CoNLL_` (Chinese, CoNLL Dependencies)

Note that these models were trained with an earlier Matlab version of the code, and your results training with the Java code may be slightly worse.

The dependency parser can be run as part of the larger CoreNLP pipeline, or run directly.

This parser is integrated into Stanford CoreNLP as the depparse annotator. If you want to use the transition-based parser from the command line, invoke StanfordCoreNLP with the depparse annotator. This annotator has dependencies on the tokenize, ssplit, and pos annotators. An example invocation follows (assuming CoreNLP is on your classpath): `java .StanfordCoreNLP -annotators tokenize,ssplit,pos,depparse -file`

Direct access (with Stanford Parser or CoreNLP)

It is also possible to access the parser directly in the Stanford Parser or Stanford CoreNLP packages. With direct access to the parser, you can train new models, evaluate models with test treebanks, or parse raw text. Note that this package currently still reads and writes CoNLL-X files, not CoNLL-U files.

The class that provides this access is edu.DependencyParser. This class' main method describes all possible options. Some usage examples:

- Parse raw text from a file: `java .nndep.DependencyParser -model -textFile rawTextToParse -outFile dependenciesOutputFile.txt`
- Parse raw text from standard input, writing to standard output: `java .nndep.DependencyParser -model -textFile -outFile`
You may download either of the following packages:

- the Stanford Parser and the Stanford POS Tagger, or
- all of Stanford CoreNLP, which contains the parser, the tagger, and other things which you may or may not need.

The classifier which powers the parser is trained using an oracle. This oracle takes each sentence in the training data and produces many training examples indicating which transition should be taken at each state to reach the correct final parse. The neural network is trained on these examples using adaptive gradient descent (AdaGrad) with hidden unit dropout.
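To make the AdaGrad step mentioned above more concrete, here is a minimal sketch of the standard per-parameter AdaGrad update. It is not the parser's actual training code: the class name `AdaGradSketch`, the learning rate, and the epsilon value are assumptions chosen for illustration, and hidden unit dropout is left out entirely.

```java
/** Illustrative AdaGrad update; names and constants are hypothetical. */
final class AdaGradSketch {
    private final double learningRate;
    private final double epsilon;              // avoids division by zero
    private final double[] squaredGradientSum; // running sum of g^2 per weight

    AdaGradSketch(int size, double learningRate, double epsilon) {
        this.learningRate = learningRate;
        this.epsilon = epsilon;
        this.squaredGradientSum = new double[size];
    }

    /** Updates weights in place using one gradient of the same length. */
    void step(double[] weights, double[] gradient) {
        for (int i = 0; i < weights.length; i++) {
            squaredGradientSum[i] += gradient[i] * gradient[i];
            // Each weight gets its own effective step size, which shrinks
            // as that weight accumulates gradient.
            weights[i] -= learningRate * gradient[i]
                    / (Math.sqrt(squaredGradientSum[i]) + epsilon);
        }
    }

    public static void main(String[] args) {
        double[] weights = {1.0, -2.0};
        AdaGradSketch optimizer = new AdaGradSketch(2, 0.1, 1e-8);
        optimizer.step(weights, new double[] {2.0, 0.0});
        optimizer.step(weights, new double[] {2.0, 0.0});
        System.out.println(weights[0] + " " + weights[1]);
    }
}
```

The second weight never moves because its gradient is zero, while the first weight takes a smaller step on the second call than on the first. That shrinking step is the "adaptive" part of the method.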

Distributed representations (dense, continuous vector representations) of the parser's current state are provided as inputs to the parser's neural network classifier, which then chooses among the possible transitions to make next. These representations describe various features of the current stack and buffer.
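As a rough illustration of the idea above, the sketch below looks up a dense vector for each feature of the parser state, concatenates them into one input, and picks the highest-scoring transition. Everything here is an assumption made for the example: the class and method names are hypothetical, the numbers are toy values, and a single linear scoring layer stands in for the parser's real neural network.

```java
/** Toy dense-feature lookup and transition scoring; all names are hypothetical. */
final class StateFeaturesSketch {

    /** Concatenates the embedding rows of the given feature ids into one input vector. */
    static double[] embed(double[][] embeddings, int[] featureIds) {
        int dim = embeddings[0].length;
        double[] input = new double[featureIds.length * dim];
        for (int i = 0; i < featureIds.length; i++) {
            System.arraycopy(embeddings[featureIds[i]], 0, input, i * dim, dim);
        }
        return input;
    }

    /** Scores each transition with a dot product and returns the index of the best one. */
    static int chooseTransition(double[][] weights, double[] input) {
        int best = 0;
        double bestScore = Double.NEGATIVE_INFINITY;
        for (int t = 0; t < weights.length; t++) {
            double score = 0.0;
            for (int j = 0; j < input.length; j++) {
                score += weights[t][j] * input[j];
            }
            if (score > bestScore) {
                bestScore = score;
                best = t;
            }
        }
        return best;
    }

    public static void main(String[] args) {
        // Three feature ids (e.g. words or tags), two dimensions each.
        double[][] embeddings = {{1.0, 0.0}, {0.0, 1.0}, {0.5, 0.5}};
        // Hypothetical state: feature id 2 on top of the stack, id 0 at the front of the buffer.
        double[] input = embed(embeddings, new int[] {2, 0});
        // One weight row per transition.
        double[][] weights = {{1, 0, 0, 0}, {0, 0, 1, 1}, {-1, -1, 0, 0}};
        System.out.println(chooseTransition(weights, input));
    }
}
```

The point of the dense lookup is that similar words or tags can share similar vectors, so the classifier does not need a separate weight for every sparse feature combination.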

With just these three types of transitions, a parser can generate any projective dependency parse. Note that for a typed dependency parser, with each transition we must also specify the type of the relationship between the head and the dependent.

The parser decides among transitions at each state using a neural network classifier.
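The text above does not name the three transitions. The sketch below assumes the usual arc-standard set (SHIFT, LEFT-ARC, RIGHT-ARC) and applies a hand-written transition sequence to a three-word sentence. The class name, the example sentence, and the relation labels are illustrative assumptions, and the sequence is supplied by hand, not chosen by a classifier.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

/** Toy arc-standard transition system; all names here are hypothetical. */
final class ArcStandardSketch {
    enum Transition { SHIFT, LEFT_ARC, RIGHT_ARC }

    private final Deque<Integer> stack = new ArrayDeque<>();
    private final Deque<Integer> buffer = new ArrayDeque<>();
    private final List<String> arcs = new ArrayList<>();

    ArcStandardSketch(int sentenceLength) {
        stack.push(0); // index 0 is an artificial ROOT token
        for (int i = 1; i <= sentenceLength; i++) {
            buffer.addLast(i);
        }
    }

    /** Applies one transition; the label is ignored for SHIFT. */
    void apply(Transition t, String label) {
        switch (t) {
            case SHIFT:
                stack.push(buffer.removeFirst());
                break;
            case LEFT_ARC: { // top of stack becomes head of the item below it
                int top = stack.pop();
                int second = stack.pop();
                arcs.add(top + "-" + label + "->" + second);
                stack.push(top);
                break;
            }
            case RIGHT_ARC: { // item below the top becomes head of the top
                int top = stack.pop();
                int second = stack.peek();
                arcs.add(second + "-" + label + "->" + top);
                break;
            }
        }
    }

    boolean isTerminal() {
        return buffer.isEmpty() && stack.size() == 1;
    }

    /** Parses "He eats fish" (1=He, 2=eats, 3=fish) with a fixed sequence. */
    static List<String> demo() {
        ArcStandardSketch p = new ArcStandardSketch(3);
        p.apply(Transition.SHIFT, null);
        p.apply(Transition.SHIFT, null);
        p.apply(Transition.LEFT_ARC, "nsubj");
        p.apply(Transition.SHIFT, null);
        p.apply(Transition.RIGHT_ARC, "obj");
        p.apply(Transition.RIGHT_ARC, "root");
        if (!p.isTerminal()) {
            throw new IllegalStateException("parse did not finish");
        }
        return p.arcs;
    }

    public static void main(String[] args) {
        System.out.println(demo());
    }
}
```

Each arc is written as head-label->dependent, so the labels passed to LEFT-ARC and RIGHT-ARC are where a typed parser records the relation type.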
